Julie and I have very different understandings of the 5 star rating system. Here’s what usually happens at a restaurant:

(Kevin noisily scrapes the last bits of food from his plate using his finger as a backstop instead of his knife)

Kevin: So how would you rate this restaurant?

Julie: I liked it! Maybe four or five stars?

(Kevin gives Julie a look of horror and confusion)

Julie: Oh, maybe more like three and a half stars

Kevin: Yeah, I was thinking three or four stars

We then (mis)remember how we rated other restaurants and try to slot this meal against those ratings. This process is haphazard at best.

And it’s not just restaurants: I’m consistently more critical than Julie on movies, books, and recipes. However, rather than accept that I am just a negative person, I instead embarked on an empirical study to prove that rating systems, not I, are the flawed party.

Book Reviews on Goodreads

Most of my reviews live on Goodreads, a site where you can both keep track of what you have read and want to read as well as stalk your friends’ bookshelves. Not only is it my favorite social media platform, it is also home to my cultivated book rating system.

Here’s my general scale for rating books:

5 Stars. This book changed my life. I probably blogged about it, and Julie basically read it because she listened to my unabridged version (with scintillating commentary) for at least a week.

4 Stars. I loved this book and would recommend it to others. This is the cap on most genre fiction that I read since I love fantasy and sci-fi, but I probably won’t rave about random books.

3 Stars. I enjoyed reading this book roughly as much as any other book. I am glad I read it but have had a similar experience with many others.

2 Stars. I would not recommend this book to others.

1 Star. This book was a waste of time, and I wish I hadn’t read it. In fact, there’s a good chance that I didn’t finish it, so I guess I fulfilled that wish.

I acknowledge that the scale has some gaps: I might give a book a five but not recommend it because it spoke to me in a particular way that others may not experience. However, these gaps make it easy to classify books and not change my mind with time and reveries.

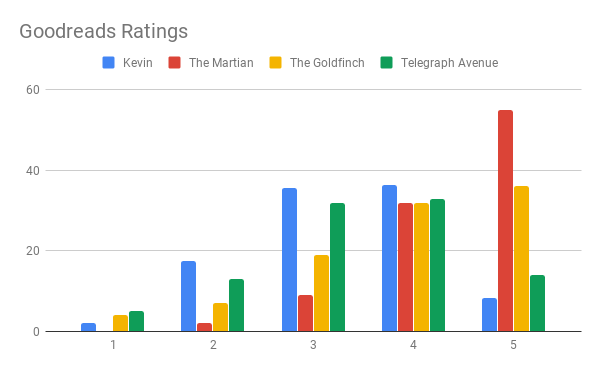

However, I noticed on Goodreads that most global average ratings are pretty close. If a book has above 4.2, it was probably a really good book. If it was below 3.8, it probably wasn’t too good. Everything else is somewhere in that 3.8-4.2 range, and that’s not very much of the scale. To see how that happens, I compared the distribution of my ratings to the distribution of everyone’s ratings for a few books. We have The Martian with a 4.4 (good), The Goldfinch with a 3.89 (average), and Telegraph Avenue with a 3.37 (really bad).

Oddly, the closest distribution was Telegraph Avenue, and that was a really bad book (according to Goodreads). For reference, my average rating is 3.31.

So is my system wrong? Well, it’s clearly different. My reviews look more like a normal distribution. The lean toward the higher end is probably because I read books that I think are worth my time.
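For anyone who wants to run the same kind of comparison on their own ratings, here is a minimal sketch. The rating lists are invented purely for illustration (they are not my Goodreads data or anyone else’s), and the helper names `star_shares`, `mean_rating`, and `distribution_gap` are my own hypothetical choices. The "gap" is the total variation distance, which is just one reasonable way to say how far apart two star distributions are.

```python
from collections import Counter


def star_shares(ratings):
    """Return the fraction of ratings at each star level, from 1 to 5."""
    counts = Counter(ratings)
    total = len(ratings)
    return [counts.get(star, 0) / total for star in range(1, 6)]


def mean_rating(ratings):
    """Plain arithmetic mean, the number a site shows as the average."""
    return sum(ratings) / len(ratings)


def distribution_gap(a, b):
    """Total variation distance: 0 means identical shapes, 1 means no overlap."""
    return sum(abs(x - y) for x, y in zip(star_shares(a), star_shares(b))) / 2


# Hypothetical data, made up for this example only.
mine = [1] * 1 + [2] * 3 + [3] * 8 + [4] * 6 + [5] * 2
crowd = [1] * 1 + [2] * 1 + [3] * 3 + [4] * 7 + [5] * 8

print(mean_rating(mine), mean_rating(crowd))
print(distribution_gap(mine, crowd))
```

A smaller gap means the two histograms have a more similar shape, regardless of how many ratings each one contains.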

Restaurant Reviews on Yelp

So far, evidence points to me being a negative person. Let’s see how other data looks. Yelp is a very popular service review website (at least here in the US and particularly in the Bay Area). I also have written a fair number of Yelp reviews. My system is:

5 Stars. This place was AMAZING. I can remember exactly what I ate there and talk a long time about what made it so special.

4 Stars. Great meal!

3 Stars. This meal was fine. I enjoyed it but would have enjoyed the next place just as much.

2 Stars. I would not come back. Something probably went wrong.

1 Star. Stay away at all costs. Actually, that’s what I think it means. I don’t actually know because I have never given a 1 star review.

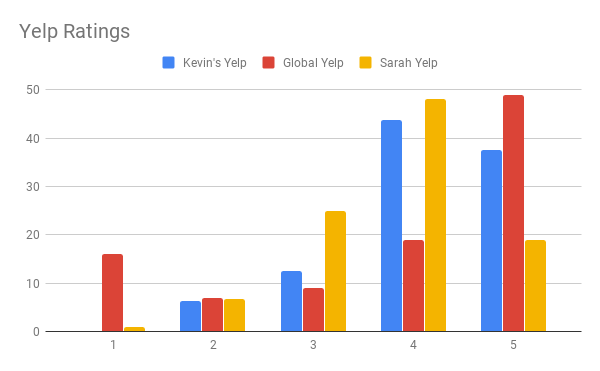

Here is a comparison of my Yelp ratings, all of Yelp’s, and those of my friend Sarah, who did not know in advance that I scraped her data for personal blog use:

On this graph, my ratings look like most reviewers’: typically favorable. The biggest difference is that I never give ones. Instead, those appear as fours. Here’s my guess why.

Many people write Yelp reviews about particularly bad experiences at restaurants. Specifically, the worst reviews typically involve either bad service or food poisoning. I luckily haven’t gotten food poisoning from a restaurant: all my food poisoning is my own fault from keeping leftovers too long. I also haven’t had service bad enough that I needed to share that experience with others online. Maybe I’m lucky and just get treated really nicely.

For the fours, I generally like restaurant food. At first, I thought we just only go to good restaurants because we always check Yelp first. However, that is true for books as well, and I am still tougher on books.

My second guess is that I have a lower baseline. Eating out is special for us. Compared to our peers, Julie and I don’t eat out very much. The professionals cook more and better than I do at home, so I would rate it accordingly.

Also, I wrote many Yelp reviews while traveling. We probably carefully researched our meal itinerary and were on a vacation-high. I haven’t broken down my reviews by location, but I suspect that my local reviews would look more normal than my vacation reviews.

Ride Sharing Reviews

Of course, I do use other systems as customary where it matters. I think that the Uber and Lyft system is absurd: as best as I can tell, the only appropriate rating to give is a five. Anything less than a five is a major faux pas, like the driver farting, setting the AC to “re-circulate”, and blaming me for it. And that’s probably a four at worst.

Ratings are too important to the livelihood of these drivers, and I don’t want to hurt drivers with my review. I would rather provide a non-answer with a 5 than blackball the driver. However, a scale where the highest rating means “fine” is definitely odd.

Final Thoughts

The 5-star review system is the standard for internet reviews, but it could always change. Older sites such as IMDb or BoardGameGeek still use 10-point scales and prominently feature the average there. And Netflix notably switched from 5 stars to thumbs up and down due to some confusion about how to use it.

Some sites explain what the stars should mean, but people don’t read that stuff. Instead, we all use our own rating system, and that all gets mashed together into an arithmetic mean.

Even if we are all inconsistent, the results aren’t useless. We all agree that more stars are better, and with enough ratings, you can tell the difference between a three star restaurant and a four star restaurant. That four stars may mean something completely different on another website, but we learn how to interpret the ratings differently for, say, Yelp versus TripAdvisor.
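Here is a toy sketch of why mashing different personal scales into one mean can still rank places sensibly. Everything in it is an assumption made up for illustration: the idea of a hidden "true" quality score, the `tough` and `generous` reviewers, and the one-star offsets. Real reviewers are messier than this.

```python
def average(stars):
    """Arithmetic mean of a list of star ratings."""
    return sum(stars) / len(stars)


def tough(quality):
    """Hypothetical reviewer who rates one star below the 'true' quality."""
    return max(1, quality - 1)


def generous(quality):
    """Hypothetical reviewer who rates one star above the 'true' quality."""
    return min(5, quality + 1)


# A made-up crowd with two tough and two generous reviewers.
reviewers = [tough, generous, tough, generous]

okay_place = average([rate(3) for rate in reviewers])   # ratings: 2, 4, 2, 4
great_place = average([rate(4) for rate in reviewers])  # ratings: 3, 5, 3, 5

print(okay_place, great_place)
```

No two reviewers here agree on what a given number of stars means, yet the better place still ends up with the higher average, because everyone agrees that more stars are better.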

I started writing Yelp reviews because I used the site so much and wanted to give back. However, I haven’t written many reviews in the past year. Among many other reasons, I didn’t write travel reviews and stopped taking so many food pictures.

In contrast, I still ardently write Goodreads reviews. They help me reflect on what I just read. They make me feel accomplished to count up at the end of the year. And they help me remember what books to recommend.

So although online reviews are ostensibly for sharing and aggregating experiences for other people, I mainly write them for myself. And that makes me feel pretty good about using my own system for assigning stars.

2 replies on “My Understanding of the 5-star Rating System”

This post gets me thinking about a lot of my work and the current understanding of online reviews.

There is this assumption that a star rating is based on how survey question measures are constructed. For example, 5 = very positive, 4 = positive, 3 = neutral, 2 = negative, 1 = very negative. When I teach my students about constructing proper scales for their surveys, they often make the mistake of imbalanced valence. Say there are 5 choices: they have 3 that are positive, 1 neutral, and 1 negative. It biases the metric. So, clearly, those who research online reviews are well aware of the balance of scales, and they (we) assume consumers have that in mind by default.

Based on what you said, you have 3 as “somewhat positive” rather than neutral. When you think about it, you are not THAT negative, because you simply put things on the lower end of the positive range. But it shows that you are more negative than average quantitatively.

You also gave me a research idea. The existing knowledge suggests reviewers are affected by other reviews for various reasons. For example, reviewers tend to adjust their rating more negatively when others are negative, so that they appear to be more differentiating and intelligent. But few have mentioned how reading reviews affects your expectation, which in turn affects your evaluation of the product. A simple experiment comes to mind: Have half of the participants read a positive review and the other half read a negative review. Then, let them experience the same product. I expect the mean product attitude of the two groups to be different.

Hmm, I hadn’t considered surveys, but that’s a really good point! Many surveys explicitly label what each choice means, though I’m not sure how much people pay attention to those things.

Let me know how that study goes about the effect of priming in product reviews =)